What do you get when you mix artificial intelligence (AI) with Internet of Things (IoT) devices? The answer is Tiny Machine Learning, or TinyML. In this post, we look at what TinyML is and what its benefits are, and we provide a few examples of how it is being used today.

TinyML

As you probably already know, machine learning (ML) uses data and algorithms to let AI models learn without being explicitly programmed. Its algorithms identify patterns in the data they're fed and use those patterns to build a model that can make predictions about new data. Machine learning covers both how AI is trained (building the model) and how AI answers your queries (running the model, also called inference).

However, AI models are computationally expensive. They typically require so much processing power that much of the number-crunching is done on remote servers (the cloud). That is what lets AI features work on off-the-shelf devices like tablets, smartphones, and smart speakers.

At the same time, the market has been flooded with small, low-powered "computers" in recent years. By that, I mean IoT devices: sensors, security devices, smart appliances, wearables (smartwatches, fitness bracelets), medical devices, and so on. These are all computers, but their power requirements (and hence their computing power) are so low that they can run on tiny batteries for many months or even years.

As it stands now, many of these devices collect data from a sensor or other input and send it to a centralized server, which runs the ML model on the device's behalf and sends the result back.

But what if we could run the AI locally on these embedded systems without even needing an internet connection?

That's where TinyML comes in.

Tiny Machine Learning (TinyML) is a field of machine learning that uses reduced, optimized models to let battery-powered, microcontroller-based embedded devices perform machine learning tasks in real time, without connecting to the internet.

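To make a model small enough, TinyML typically relies on techniques called pruning and quantization. Pruning removes the weights (connections) that contribute least to the model's output, and quantization stores the remaining weights at lower numeric precision, for example as 8-bit integers instead of 32-bit floating-point numbers. Together, they shrink the model and reduce its computational load so it can run on a low-powered embedded device.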
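Both steps can be sketched in a few lines of plain Python. This is a minimal illustration, not how any particular TinyML toolchain implements them. It assumes the model's weights are a flat list of floats, prunes the 50% with the smallest magnitude (an arbitrary illustrative target), and then applies symmetric per-tensor 8-bit quantization. The function names are hypothetical.

```python
# Minimal sketch of magnitude pruning followed by symmetric 8-bit quantization.
# Assumptions (illustrative only): weights are a flat list of floats, the
# sparsity target is 50%, and one scale factor is shared by the whole tensor.

def prune_by_magnitude(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    n_prune = int(len(weights) * sparsity)
    # Indices sorted by absolute value, smallest first
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned

def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate float weights from the 8-bit values."""
    return [v * scale for v in q]

if __name__ == "__main__":
    weights = [0.82, -0.05, 0.31, -0.67, 0.02, 0.44, -0.11, 0.9]
    pruned = prune_by_magnitude(weights, sparsity=0.5)
    q, scale = quantize_int8(pruned)
    print("pruned:  ", pruned)
    print("int8:    ", q)
    print("restored:", [round(v, 3) for v in dequantize(q, scale)])
```

Storing each weight in 8 bits instead of 32 cuts weight storage by roughly 4x, and the zeros left by pruning can be skipped or compressed. Production tools add refinements such as calibration data, per-channel scales, and fine-tuning to recover accuracy lost in these steps.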

Examples of TinyML Today

Whether you realize it or not, you likely use TinyML on a daily basis.

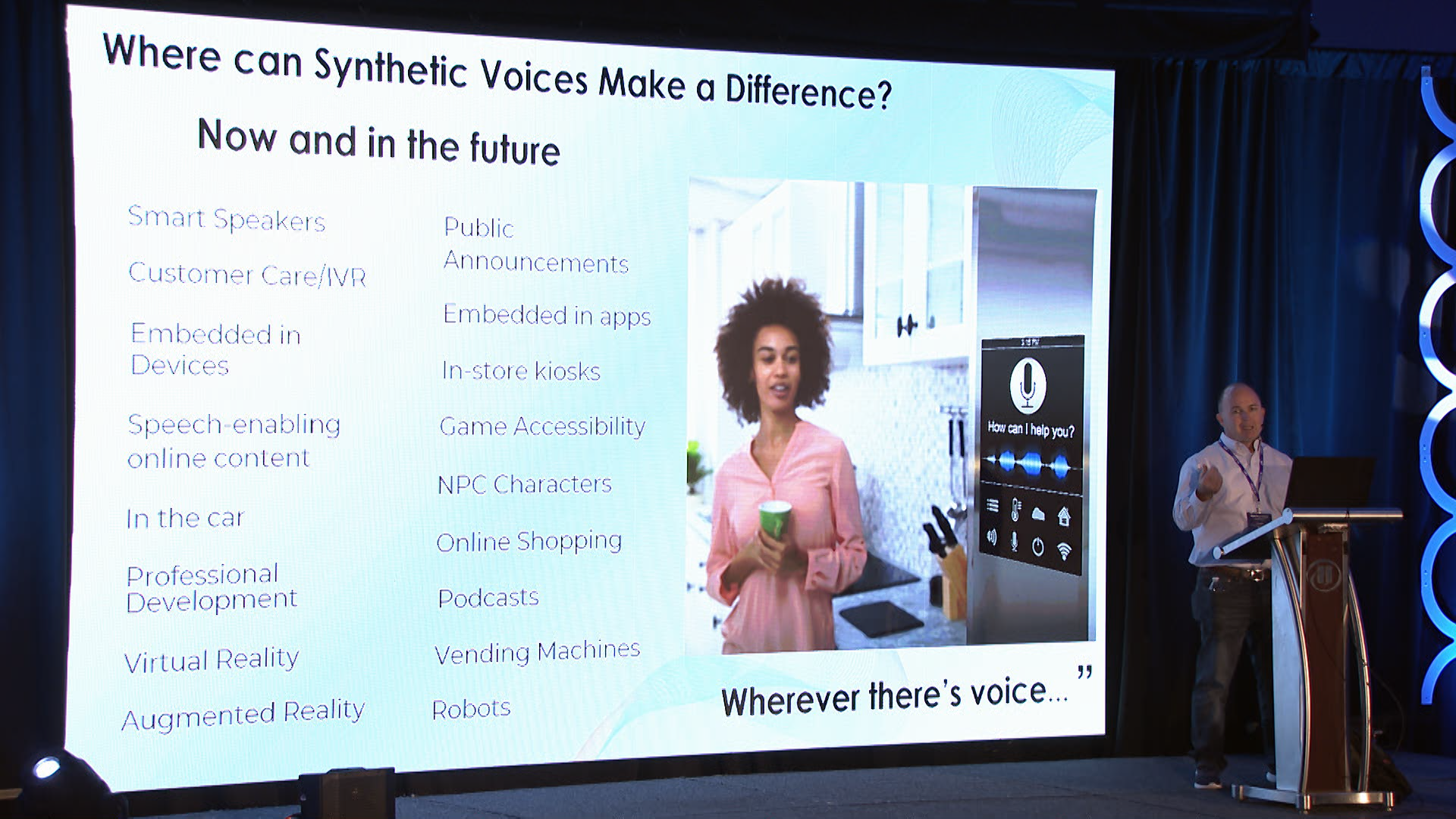

Some common uses for TinyML include:

- Keyword identification

- Gesture recognition

- Audio detection

- Object recognition and classification

An excellent example of a widespread TinyML application is the wake-word detection model used in smartphones. That's what enables the now-famous "Hey Google" and "Hey Siri" commands.

These are examples of TinyML today. As the field advances, though, it will eventually let us embed AI in almost any device. TinyML epitomizes doing more with less, and it stands to benefit a host of industries, not least healthcare.

Healthcare already relies on many embedded devices to improve medical outcomes, such as pacemakers and defibrillators, to name just two. Imagine endowing these devices with AI so that they could self-correct or detect anomalies that require a doctor's intervention. The possibilities go beyond what we're able to imagine today.

A unique example of TinyML in healthcare comes from the Solar Scare Mosquito project. In an effort to curb the spread of mosquito-borne diseases such as malaria, dengue, and the Zika virus, the project used TinyML to create a sensor that monitors conditions for mosquito breeding. When it detects those conditions, it agitates the water to prevent mosquitoes from reproducing. And because it is solar-powered, the sensor can run indefinitely.

Benefits of TinyML Over Traditional ML

While they complement each other, TinyML has some benefits that traditional ML lacks (as well as some downsides that don't affect traditional or standard ML).

- Lower Latency: Because the model runs locally on the device, there's no need to send data to a remote server for processing and wait for the result. Skipping that round trip reduces the time it takes to produce an output.

- Low Power Consumption: Embedded devices use microcontrollers, which consume very little power. That enables them to run on small batteries for extended periods without requiring a charge.

- Low Bandwidth: Many of these embedded devices do not include a networking stack, so they never connect to the internet. Even those that do use much less bandwidth, because TinyML lets them perform most of their computations locally. They don't need a constant connection to a remote server, which translates into much lower bandwidth usage.

- Enhanced Privacy: Another prime benefit is privacy. Cloud-based AI models produce better output the more data they receive, so they have an incentive to collect it. Because TinyML runs locally on your device, your data either is never transmitted to a remote server and stays on your device, or, for devices that still rely on the cloud for certain operations, is sent in smaller amounts and less often. Either way, you gain privacy.
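To put the Low Bandwidth point in numbers, here is a back-of-the-envelope comparison for a hypothetical always-listening audio sensor. The figures are assumptions chosen for illustration, not measurements of any real device: raw 16 kHz, 16-bit mono audio streamed to the cloud, compared with a 32-byte message sent only when an on-device model detects a keyword, 50 times a day. Protocol overhead is ignored.

```python
# Back-of-the-envelope bandwidth comparison for a hypothetical audio sensor.
# All constants below are illustrative assumptions, not real device specs.

SAMPLE_RATE_HZ = 16_000       # assumed audio sample rate
BYTES_PER_SAMPLE = 2          # 16-bit mono PCM
SECONDS_PER_DAY = 24 * 60 * 60
EVENT_BYTES = 32              # hypothetical size of one "keyword detected" message
EVENTS_PER_DAY = 50           # hypothetical number of detections per day

def streaming_bytes_per_day():
    """Bytes sent per day if raw audio is streamed to a server for cloud inference."""
    return SAMPLE_RATE_HZ * BYTES_PER_SAMPLE * SECONDS_PER_DAY

def event_bytes_per_day():
    """Bytes sent per day if inference runs on-device and only results are reported."""
    return EVENT_BYTES * EVENTS_PER_DAY

if __name__ == "__main__":
    cloud = streaming_bytes_per_day()
    local = event_bytes_per_day()
    print(f"streaming: {cloud / 1e6:.1f} MB/day")
    print(f"on-device: {local} bytes/day")
    print(f"reduction: ~{cloud // local:,}x")
```

Under these assumptions, streaming sends about 2.76 GB per day, while on-device inference sends 1,600 bytes, a reduction of more than six orders of magnitude.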

Wrap Up

That was a bird's-eye view of TinyML. While it may have the word "tiny" in its name, it will arguably be huge. IoT devices went mainstream rather quickly. TinyML is the next evolution of IoT devices, and I would bet that it makes its way into most of these devices at a similar pace, if not faster. It will make these devices more powerful and lay the groundwork for next-level IoT experiences across industries.

We predict a big future for TinyML.

About Modev

Modev was founded in 2008 on the simple belief that human connection is vital in the era of digital transformation. Modev believes markets are made. From mobile to voice, Modev has helped develop ecosystems for new waves of technology. Today, Modev produces market-leading events such as VOICE Global, presented by Google Assistant; VOICE Summit, the most important voice-tech conference globally; and the Webby Award-winning VOICE Talks internet talk show. Modev staff, better known as "Modevators," include community-building and transformation experts worldwide. To learn more about Modev and the breadth of events and ecosystem services it offers live, virtually, locally, and nationally, visit modev.com.